Deploy Alluxio on Azure Cobalt 100 Arm64 virtual machines for data orchestration and caching

Set up Apache Spark with Alluxio

In this section, you’ll connect Apache Spark to Alluxio and enable in-memory caching.

Without caching, Spark re-reads data from storage on each pass. With Alluxio in the path, frequently accessed data can stay in memory, which reduces repeated storage reads.

You’ll then time repeated reads to see the effect of caching.

Install Apache Spark

Download Apache Spark, extract it, and place it under /opt:

cd ~

wget https://archive.apache.org/dist/spark/spark-3.4.2/spark-3.4.2-bin-hadoop3.tgz

tar -xvzf spark-3.4.2-bin-hadoop3.tgz

sudo mv spark-3.4.2-bin-hadoop3 /opt/spark

sudo chown -R $USER:$USER /opt/spark

Configure Spark environment

Set the Spark environment variables so you can run Spark commands from your shell:

echo 'export SPARK_HOME=/opt/spark' >> ~/.bashrc

echo 'export PATH=$PATH:$SPARK_HOME/bin' >> ~/.bashrc

source ~/.bashrc
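Running the echo commands above more than once appends duplicate lines to ~/.bashrc. The following is a minimal sketch of an idempotent alternative; append_once is a hypothetical helper name chosen for illustration, not part of Spark or Alluxio.

```shell
# Append a line to a file only if that exact line is not already present.
# append_once is a hypothetical helper, not a Spark or Alluxio command.
append_once() {
  line=$1
  file=$2
  # -q: quiet, -x: match the whole line, -F: treat the pattern as a fixed string
  if ! grep -qxF -- "$line" "$file" 2>/dev/null; then
    printf '%s\n' "$line" >> "$file"
  fi
}
```

To use it, call append_once 'export SPARK_HOME=/opt/spark' ~/.bashrc and append_once 'export PATH=$PATH:$SPARK_HOME/bin' ~/.bashrc, then run source ~/.bashrc as before.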

Connect Spark with Alluxio

Open the Spark configuration file and add the Alluxio filesystem implementation and client JAR paths:

nano $SPARK_HOME/conf/spark-defaults.conf

spark.hadoop.fs.alluxio.impl=alluxio.hadoop.FileSystem

spark.driver.extraClassPath=/opt/alluxio/client/alluxio-2.9.4-client.jar

spark.executor.extraClassPath=/opt/alluxio/client/alluxio-2.9.4-client.jar

These properties register Alluxio’s Hadoop-compatible filesystem implementation so Spark can resolve alluxio:// URIs. They also add the Alluxio client JAR to both the driver and executor classpaths.
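If you prefer not to edit the file interactively, the same three properties can be appended from the shell. This is a sketch only: write_alluxio_conf is a hypothetical helper, and the default JAR path is the one used in this section, so adjust it if your Alluxio version or install location differs.

```shell
# Append the Alluxio-related Spark properties to a config file.
# write_alluxio_conf is a hypothetical helper; the default JAR path is an
# assumption taken from this section and may differ on your system.
write_alluxio_conf() {
  conf_file=$1
  client_jar=${2:-/opt/alluxio/client/alluxio-2.9.4-client.jar}
  cat >> "$conf_file" <<EOF
spark.hadoop.fs.alluxio.impl=alluxio.hadoop.FileSystem
spark.driver.extraClassPath=$client_jar
spark.executor.extraClassPath=$client_jar
EOF
  # Warn, but do not fail, if the JAR is not where the config says it is.
  [ -f "$client_jar" ] || echo "warning: $client_jar not found" >&2
}
```

A typical call would be write_alluxio_conf "$SPARK_HOME/conf/spark-defaults.conf". Because the helper appends, run it once on a fresh file to avoid duplicate entries.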

Create a dataset

Create a sample dataset in /mnt/data/demo. Files placed in /mnt/data are accessible to Spark through the alluxio:/// URI prefix because /mnt/data is configured as Alluxio’s root underlying file system:

rm -rf /mnt/data/demo

mkdir -p /mnt/data/demo

The following loop generates 100,000 records, creating a small but representative dataset for the caching benchmark:

for i in {1..100000}; do
  echo "record $i - alluxio spark learning"
done > /mnt/data/demo/data.txt

Verify the file was created successfully:

wc -l /mnt/data/demo/data.txt

The output is similar to:

100000 /mnt/data/demo/data.txt
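A dataset of this size reads quickly, so timing differences can be hard to see. If you want to experiment with other sizes, a parameterized generator may help. The sketch below assumes awk is available; gen_records is a hypothetical helper name, and the record text matches the loop above.

```shell
# Generate N numbered records into a file in a single write.
# gen_records is a hypothetical helper, not part of Spark or Alluxio.
gen_records() {
  count=$1
  out=$2
  # Create the parent directory if it does not exist yet.
  mkdir -p "$(dirname "$out")"
  awk -v n="$count" 'BEGIN {
    for (i = 1; i <= n; i++) print "record " i " - alluxio spark learning"
  }' > "$out"
}
```

For example, gen_records 100000 /mnt/data/demo/data.txt reproduces the file used in this section, and a larger count produces a bigger file with the same format.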

Start the Spark shell

Start the interactive Spark shell. This opens a Scala REPL with a pre-configured SparkSession available as spark. All commands in the following sections run inside this shell:

spark-shell

The output is similar to:

Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 3.4.2
      /_/

Using Scala version 2.12.17 (OpenJDK 64-Bit Server VM, Java 11.0.30)
Type in expressions to have them evaluated.
Type :help for more information.

scala>

Load data via Alluxio

Load the sample dataset through the Alluxio namespace and confirm that Spark can read it successfully:

val df = spark.read.text("alluxio:///demo/data.txt")

df.count()

The expected output is:

100000

Enable caching

df.cache() marks the DataFrame for Spark’s in-memory caching. The subsequent df.count() triggers a full read, loading the data from Alluxio into Spark’s cache. After this step, repeat reads on df are served from Spark’s in-memory cache rather than going back through Alluxio:

df.cache()

df.count()

Measure performance

Time a first count:

val t1 = System.nanoTime()

df.count()

val t2 = System.nanoTime()

println((t2 - t1)/1e9 + " seconds")

Time a second count on the same DataFrame:

val t3 = System.nanoTime()

df.count()

val t4 = System.nanoTime()

println((t4 - t3)/1e9 + " seconds")

Run both timing blocks and compare the printed values. The output is similar to:

0.44 seconds

0.39 seconds

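Both timed runs read from Spark’s in-memory cache, because the df.cache() and df.count() calls in the previous step already materialized it. The gap between them is therefore small and likely reflects JVM warm-up and ordinary run-to-run variation. Cached reads bypass Alluxio and the underlying storage entirely. To capture a genuinely uncached baseline, time a df.count() before calling df.cache().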
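To put the two sample timings in perspective, you can compute the ratio and the percentage change between them. The sketch below uses the sample values printed above (0.44 and 0.39 seconds); compare_runs is a hypothetical helper, and single measurements like these are noisy, so treat the result as indicative only.

```shell
# Compare two elapsed times (in seconds) and report the speedup and reduction.
# compare_runs is a hypothetical helper, not a Spark or Alluxio command.
compare_runs() {
  awk -v first="$1" -v second="$2" 'BEGIN {
    printf "speedup: %.2fx\n", first / second
    printf "time reduction: %.1f%%\n", (first - second) / first * 100
  }'
}

# Sample values taken from the output shown in this section.
compare_runs 0.44 0.39
# prints:
#   speedup: 1.13x
#   time reduction: 11.4%
```

Repeating each measurement several times and comparing averages gives a more reliable picture than a single pair of runs.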

Verify in Alluxio UI

Open the Alluxio UI. Replace <VM-IP> with the public IP of your VM:

http://<VM-IP>:19999
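Before opening a browser, you can check from a terminal whether the UI port answers. This is a sketch that assumes curl is installed and that port 19999 is reachable from where you run it; ui_url and check_ui are hypothetical helper names.

```shell
# Build the Alluxio Web UI URL from a host and an optional port (default 19999).
ui_url() {
  printf 'http://%s:%s\n' "$1" "${2:-19999}"
}

# Probe the UI and print only the HTTP status code.
check_ui() {
  # -s: silent, -o /dev/null: discard the body, -w: print the status code
  curl -s -o /dev/null --max-time 5 -w '%{http_code}\n' "$(ui_url "$1")"
}
```

Run check_ui with your VM's public IP as the argument. A status of 200 suggests the UI is being served, while 000 means curl could not connect, which often points to a closed port or a stopped Alluxio master.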

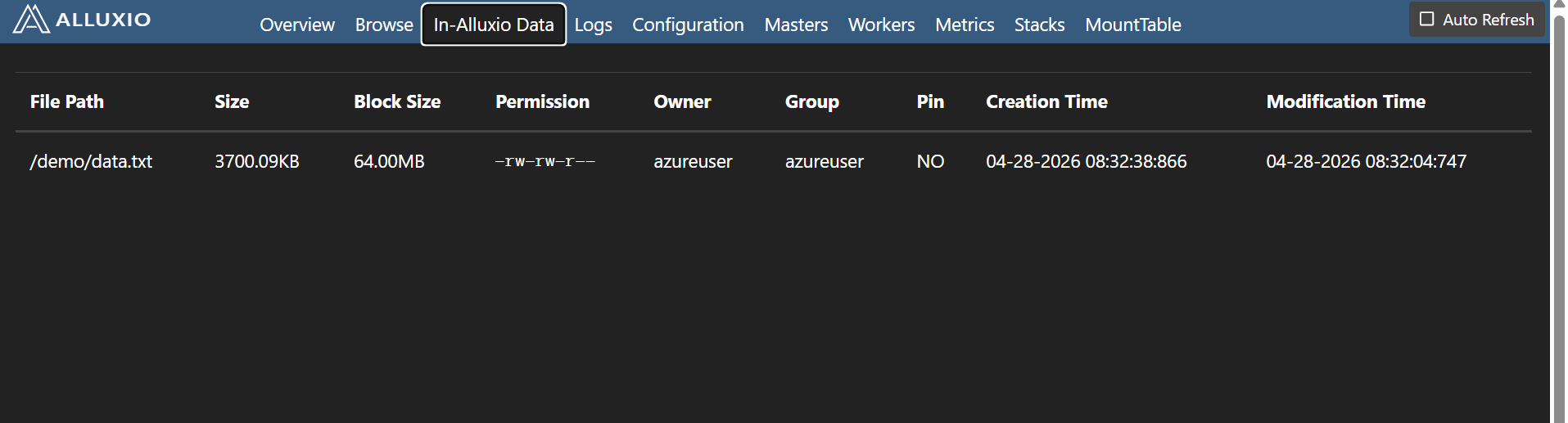

Alluxio cluster load and worker utilization during processing

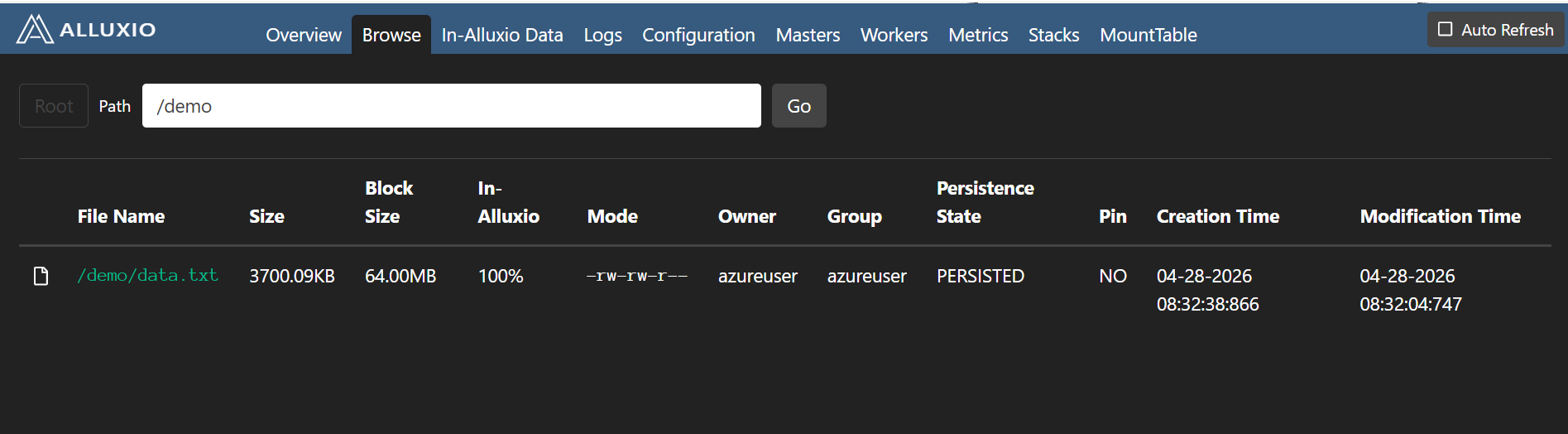

Alluxio data view displaying cached datasets

The UI shows the files stored in the Alluxio namespace, including the cached files and directories available for fast access.

In the Alluxio Web UI, confirm the following:

- Increased worker memory usage in the worker summary

- Cached file blocks listed in the data browser

- Active data access reflected in the cluster metrics

What you’ve accomplished

You’ve now connected Apache Spark to Alluxio on an Azure Cobalt 100 Arm64 VM and loaded data through the Alluxio namespace. You cached the DataFrame in Spark and timed repeated reads. You then verified the caching activity in the Alluxio Web UI, where worker memory usage increased and cached file blocks became visible after the first read.

To see the full performance benefit of Alluxio, you can replace the local disk UFS with a remote storage backend such as Azure Blob Storage. In that configuration, Alluxio caches data in local worker memory after the first read, eliminating repeated remote storage round-trips for subsequent Spark jobs.