Connect AI agents to edge devices using Device Connect and Strands

Physical AI starts with connectivity

Arm processors are at the heart of a remarkable range of systems, from Cortex-M microcontrollers in industrial sensors to Neoverse servers running in the cloud. That breadth of hardware is one of Arm’s greatest strengths, but it raises a practical question for AI developers: how do you give an agent structured, safe access to devices that are physically distributed and built on different software stacks?

Natural language is becoming more than a software interface. Physical AI systems can now sense, decide, and act based on instructions from an LLM agent. But for that to work, the devices need to be reachable. They need a shared infrastructure for discovery, communication, and coordination.

Device Connect is Arm’s device-aware framework for exactly that. Once devices register through a shared mesh, agents can discover and command any of them without caring where they run. A fleet of robot arms, a network of sensors, or a mix of physical and simulated devices all become equally reachable.

Strands Robots is a robot SDK that integrates Device Connect with the AWS Strands Agents SDK. Using Strands, an LLM can query the device mesh (“who’s available?”), understand what each device can do, and dynamically invoke actions, turning natural language intent into real-world outcomes.

This Learning Path starts on a single machine, where a simulated robot and an agent discover each other automatically, then optionally extends to a Raspberry Pi joining the same device mesh over the network.

How the pieces fit together

Two packages make this work.

device-connect-sdk is the device-side runtime. Any process that wraps a robot in a DeviceDriver and starts a DeviceRuntime joins the mesh: it registers itself, announces what it can do, and starts publishing state events. Zenoh handles low-level connectivity; in device-to-device (D2D) mode, no broker or environment configuration is needed.

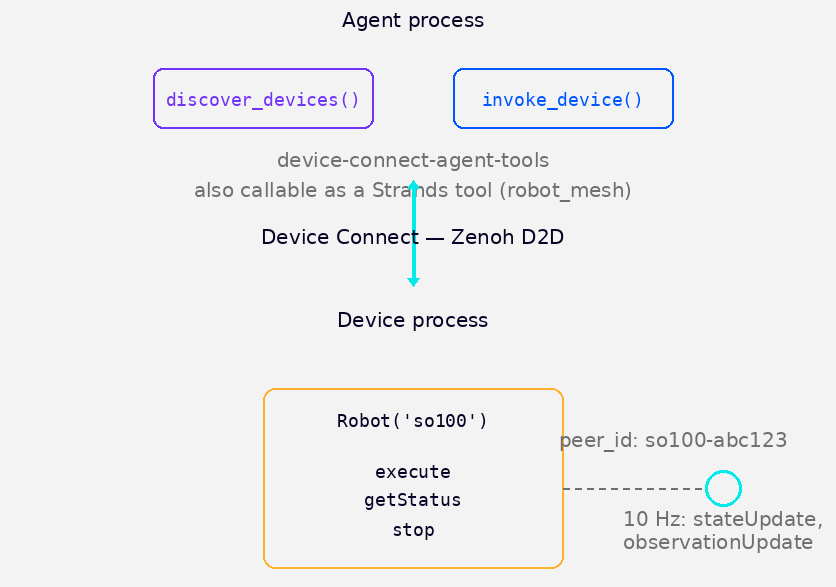

device-connect-agent-tools is the agent-side runtime. It exposes discover_devices() and invoke_device() as plain Python functions. The robot_mesh tool in Strands Robots wraps the same interface as a Strands tool, so an LLM can call it too. Both use the same underlying transport, so the same calls work whether the caller is a script or an agent.
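The script-or-agent symmetry described above can be illustrated with a toy, in-process model. This is only a sketch and does not import either package: `join_mesh`, `handle_tool_call`, the `_MESH` dictionary, and the JSON request shape are hypothetical stand-ins, and the local `discover_devices()` only mirrors the name used in the text, not its real signature or return type.

```python
# Toy in-process model. Nothing here comes from the Device Connect packages;
# discover_devices is a local stand-in that only mirrors the name in the text.
import json
from typing import Any, Callable, Dict, List

# Stand-in for the mesh: device ID -> names of the functions it announced.
_MESH: Dict[str, List[str]] = {}


def join_mesh(device_id: str, functions: List[str]) -> None:
    """Device side: register and announce capabilities (hypothetical helper)."""
    _MESH[device_id] = sorted(functions)


def discover_devices() -> List[Dict[str, Any]]:
    """Agent side: list every device currently on the toy mesh."""
    return [{"device_id": d, "functions": f} for d, f in sorted(_MESH.items())]


# A tool wrapper exposes the same function under a name an LLM can request.
TOOLS: Dict[str, Callable[..., Any]] = {"discover_devices": discover_devices}


def handle_tool_call(request_json: str) -> Any:
    """Dispatch a JSON tool request, as an agent framework might (assumed shape)."""
    request = json.loads(request_json)
    return TOOLS[request["name"]](**request.get("arguments", {}))


join_mesh("so100-abc123", ["execute", "getStatus", "stop"])

from_script = discover_devices()  # called directly from a script
from_agent = handle_tool_call('{"name": "discover_devices", "arguments": {}}')
print(from_script)
```

Both paths end in the same function call, which is why the result does not depend on whether a script or an agent issued the request.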

Device Connect architecture: agent layer and device layer connected over Zenoh D2D

What a device exposes

This Learning Path uses the SO-100 arm, an open-source robot arm. When Robot('so100').run() starts, it registers on the mesh and exposes three callable functions. These are the targets of invoke_device() on the agent side. Calling invoke_device("so100-abc123", "execute", {...}) routes a request over Zenoh to the robot process, runs the function there, and returns the result to the caller. The three functions are:

- execute: send a natural language instruction and a policy provider to the robot

- getStatus: query what the robot is currently doing

- stop: halt the current task, or emergency_stop to halt every device on the mesh at once
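The routing behavior of these functions can be sketched with a small in-memory model. Everything below is an assumption made for illustration: `ToyArm`, the `MESH` dictionary, the second device ID, and the `instruction` parameter name are hypothetical, and the real calls travel over Zenoh rather than through a Python dictionary.

```python
# Toy model of request routing. ToyArm and the dict-based mesh are hypothetical.
from typing import Any, Dict, Optional


class ToyArm:
    """Stand-in for a robot process holding at most one active task."""

    def __init__(self) -> None:
        self.task: Optional[str] = None

    def execute(self, instruction: str, policy_provider: str) -> Dict[str, Any]:
        self.task = instruction
        return {"accepted": True, "policy_provider": policy_provider}

    def get_status(self) -> Dict[str, Any]:
        return {"busy": self.task is not None, "task": self.task}

    def stop(self) -> Dict[str, Any]:
        self.task = None
        return {"stopped": True}


MESH: Dict[str, ToyArm] = {"so100-abc123": ToyArm(), "so100-def456": ToyArm()}


def invoke_device(device_id: str, function: str, params: Dict[str, Any]) -> Any:
    """Route one call to one device, mapping the function name to a handler."""
    arm = MESH[device_id]
    handlers = {"execute": arm.execute, "getStatus": arm.get_status, "stop": arm.stop}
    return handlers[function](**params)


def emergency_stop() -> int:
    """Stop every device on the toy mesh and return how many were halted."""
    for arm in MESH.values():
        arm.stop()
    return len(MESH)


task = {"instruction": "pick up the cube", "policy_provider": "mock"}
for device_id in MESH:
    invoke_device(device_id, "execute", task)

# stop only affects the addressed device.
invoke_device("so100-abc123", "stop", {})
busy_after_stop = [invoke_device(d, "getStatus", {})["busy"] for d in sorted(MESH)]

# emergency_stop reaches every device on the mesh.
halted = emergency_stop()
busy_after_estop = [invoke_device(d, "getStatus", {})["busy"] for d in sorted(MESH)]
print(busy_after_stop, halted, busy_after_estop)
```

The sketch highlights the difference in scope: `stop` is addressed to one device, while `emergency_stop` applies to the whole mesh.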

A motion policy is the component that translates a high-level instruction like “pick up the cube” into a sequence of joint movements. Different policy providers connect to different backends — from local model inference to remote policy servers. For this Learning Path, policy_provider='mock' is used, so execute accepts the task and returns immediately without running real motion. Replacing 'mock' with a real provider like 'lerobot_local' or 'groot' is a one-line change once you have the connectivity working.
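One way to picture why swapping providers is a one-line change is a lookup table keyed by provider name. The sketch below is hypothetical throughout: the `POLICY_PROVIDERS` registry, the `scripted` provider, and the return shape are invented for illustration and say nothing about how 'lerobot_local' or 'groot' are actually implemented.

```python
# Hypothetical policy registry. The real providers' interfaces are not shown
# in this text, so every name below is an illustrative assumption.
from typing import Any, Callable, Dict, List

JointTargets = List[List[float]]  # one list of joint angles per motion step


def mock_policy(instruction: str) -> JointTargets:
    """Accept the task but plan no motion, like a mock provider."""
    return []


def scripted_policy(instruction: str) -> JointTargets:
    """Placeholder for a real backend: returns two fixed waypoints."""
    return [[0.0, 0.5, -0.5], [0.1, 0.6, -0.4]]


POLICY_PROVIDERS: Dict[str, Callable[[str], JointTargets]] = {
    "mock": mock_policy,
    "scripted": scripted_policy,  # hypothetical, not a provider named in the text
}


def execute(instruction: str, policy_provider: str = "mock") -> Dict[str, Any]:
    """Look up the provider by name and report how many steps it planned."""
    steps = POLICY_PROVIDERS[policy_provider](instruction)
    return {
        "instruction": instruction,
        "policy_provider": policy_provider,
        "steps": len(steps),
    }


mock_result = execute("pick up the cube", policy_provider="mock")
scripted_result = execute("pick up the cube", policy_provider="scripted")
print(mock_result["steps"], scripted_result["steps"])
```

Because the caller only passes a provider name, the connectivity code stays the same when the backend changes.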

Beyond discrete RPC calls, devices can also publish a continuous stream of sensor data over the same mesh. A camera publishes image frames, a depth sensor publishes point clouds, and an IMU reports pose updates — all as Device Connect events that any subscriber on the network receives in real time. The simulated robot in this Learning Path publishes joint state and observation updates at 10 Hz.
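The event stream can be sketched as a minimal publish/subscribe bus. This is a toy model under stated assumptions: `ToyEventBus`, the topic string, and the event fields are hypothetical, and timestamps are simulated rather than driven by a real clock. At 10 Hz the period between events is 1/10 s, or 100 ms.

```python
# Toy publish/subscribe bus with a simulated clock. All names are hypothetical.
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Event = Dict[str, Any]


class ToyEventBus:
    """Delivers each published event to every subscriber of its topic."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Event], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, event: Event) -> None:
        for callback in self._subscribers[topic]:
            callback(event)


RATE_HZ = 10
PERIOD_S = 1.0 / RATE_HZ  # 0.1 s between events at 10 Hz
TOPIC = "so100-abc123/joint_state"  # assumed topic name, for illustration only


def publish_joint_states(bus: ToyEventBus, duration_s: float) -> int:
    """Publish one joint-state event per period using simulated timestamps."""
    count = round(duration_s * RATE_HZ)
    for i in range(count):
        bus.publish(TOPIC, {"t": i * PERIOD_S, "joints": [0.0, 0.0, 0.0]})
    return count


bus = ToyEventBus()
received: List[Event] = []
bus.subscribe(TOPIC, received.append)

sent = publish_joint_states(bus, duration_s=2.0)
print(sent, len(received))
```

Unlike an RPC call, the publisher here does not wait for or know about its subscribers; any number of callbacks can be attached to the same topic.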

What you’ll learn in this Learning Path

By working through the remaining sections you’ll:

- Clone the sample repository and install the Device Connect SDK, agent tools, and Strands robot runtime from source into a single virtual environment.

- Start a simulated robot that registers itself on the local device mesh.

- Discover and invoke the robot using device-connect-agent-tools directly.

- Discover and command the robot through the robot_mesh Strands tool, including an emergency stop.

- Optionally extend the setup to a Raspberry Pi connected over the network, discovering and commanding it from your laptop through the Device Connect infrastructure.

The next section covers the environment setup.